Harnessing the vibes

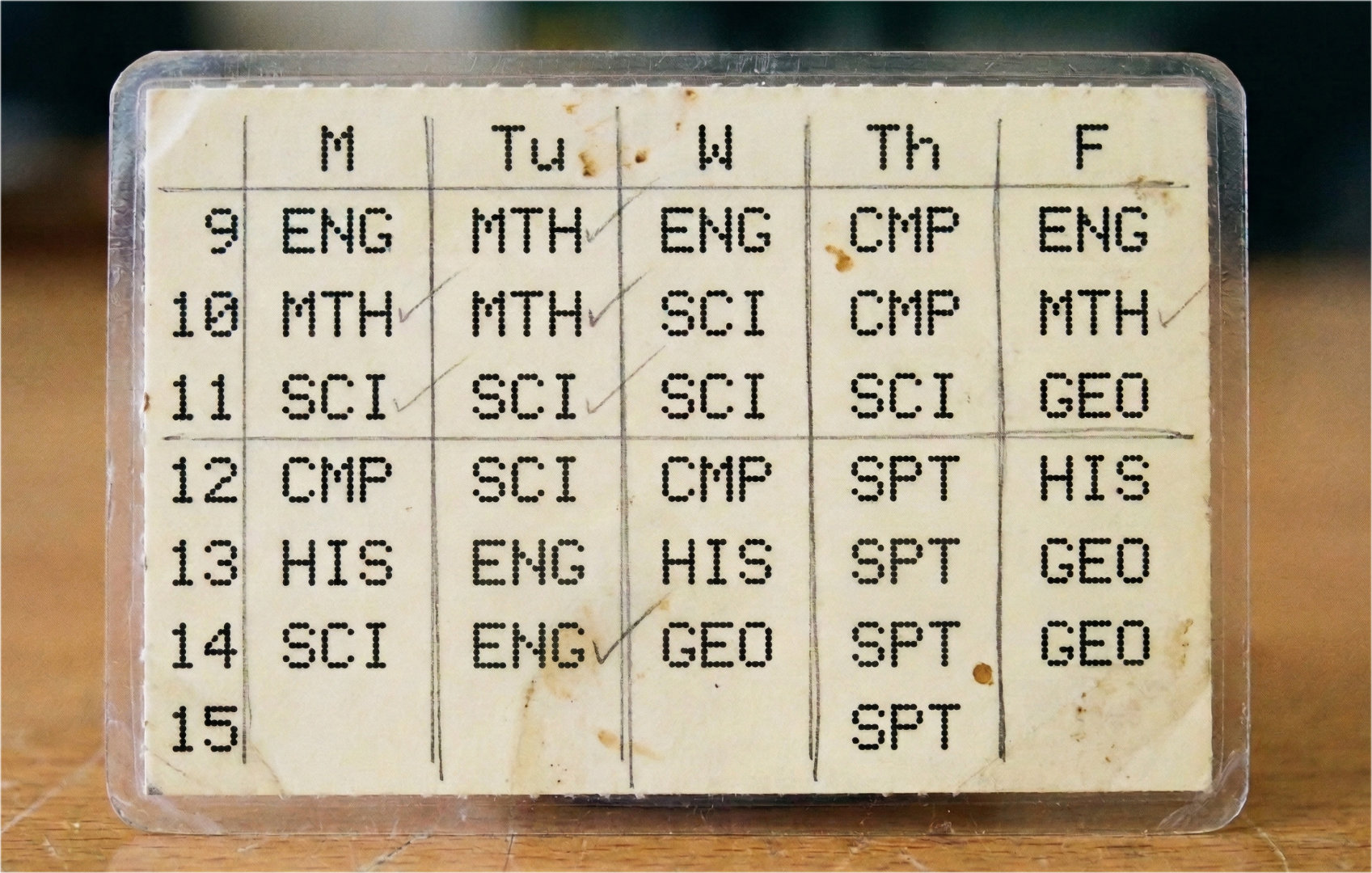

Imagine it is your first year of high school. For me, entering as a freshman was a period of massive transition and excitement, but the sheer chaos of navigating a brand-new environment was a lot for my mind to process. To help cope with it all, I wrote up a tiny pocket timetable for my classes. It became such a reliable lifeline that my friends were always asking me what class we had next, simply because they knew I could quickly pull it out and check. In a very similar vein, this is exactly what "Harness Engineering" does for AI. It acts as that strict timetable—turning a chaotic, overwhelming set of instructions into a predictable schedule. It tells the AI exactly what to do and when, simplifying the execution of complex software tasks.

Back in early 2025, the industry was obsessed with vibe coding—chatting loosely with an AI to build software without a strict plan. But just like a freshman wandering the halls without a schedule, it quickly turned into a mess in production. Letting an AI code continuously without structural boundaries led to context degradation, architectural drift, and cascading bugs. This failure gave rise to Harness Engineering. Instead of chatting, developers now build orchestration layers around coding agents. A harness manages memory, controls tools, and enforces strict phase gates. It hands the AI that pocket timetable, shifting the developer's role from writing syntax to enforcing structured agent harnesses.

Put simply: harness engineering is the infrastructure that stops an AI from guessing and forces it to follow a predictable, repeatable software development process.
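Here is a minimal sketch of that definition. It models a harness as a fixed sequence of phases, and each phase is followed by a gate that must pass before the next one starts. The `Phase` class, the `run_harness` function, and the phase names are hypothetical. They do not come from any of the tools discussed below, and the lambdas stand in for real agent calls.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical phase model: each phase does some work on shared state,
# then a gate decides whether the harness may proceed.
@dataclass
class Phase:
    name: str
    run: Callable[[dict], None]
    gate: Callable[[dict], bool]


def run_harness(phases: list[Phase], state: dict) -> list[str]:
    """Run phases in a fixed order, stopping at the first failed gate."""
    completed: list[str] = []
    for phase in phases:
        phase.run(state)
        if not phase.gate(state):
            # The harness halts instead of letting the agent improvise.
            raise RuntimeError(f"gate failed after phase '{phase.name}'")
        completed.append(phase.name)
    return completed


if __name__ == "__main__":
    # Stand-in steps; a real harness would invoke a coding agent here.
    phases = [
        Phase("spec", lambda s: s.update(spec="add login form"), lambda s: bool(s.get("spec"))),
        Phase("plan", lambda s: s.update(plan=["write form", "add test"]), lambda s: len(s["plan"]) > 0),
        Phase("code", lambda s: s.update(tests_pass=True), lambda s: s["tests_pass"]),
    ]
    print(run_harness(phases, {}))  # ['spec', 'plan', 'code']
```

The agent never decides on its own what to do next. The order of phases and the conditions for advancing both live in the harness.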

The Harness Tools

If the AI model is the engine, the harness provides the steering wheel, throttle, and dashboard. Just like my high school timetable kept me from wandering aimlessly into the wrong classroom, these tools keep the AI strictly on task.

Here is a quick breakdown of the main players in the space right now, categorized by how they control the AI's execution:

- openspec (The Intent Harness): The steering wheel. It forces the AI to establish a rigid source of truth (`proposal.md`, `design.md`) before any code is written. It locks in the exact intent and prevents the AI from drifting off-script.

- gsd (The Execution Harness): The throttle and brakes. "Get Shit Done" prevents context rot by forcing the AI into tight, isolated loops: Read ticket > Plan > Code > Exit. It intentionally wipes the AI's memory clean after every single micro-task so it stays sharp.

- Gas Town (The Runtime Harness): The persistent dashboard. It eliminates the "yak shaving" of managing background agents. It uses `tmux`, Git-backed memory ("Beads"), and a Dolt database to keep agents running continuously without losing their minds.

- Chorus (The Orchestration Harness): The project manager. Built for complex epics, it uses Task DAGs (dependency graphs) and mandatory human-approval gates to keep an entire swarm of AIs organized and working in parallel.

- BMAD (The Agile Workflow): The virtual product team. Operating as a native plugin for tools like Claude Code, it uses slash commands to summon specialized personas (Analyst, Architect, Developer) and passes strictly numbered state-files between them.
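Here is a rough sketch of the fresh-context loop described for `gsd`. This is not `gsd`'s actual implementation. `Context`, `fake_agent`, and `work_through_backlog` are hypothetical stand-ins that show only the core idea: every ticket starts with a brand-new context, and nothing carries over from one ticket to the next.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """A fresh, per-ticket working memory (hypothetical)."""
    messages: list[str] = field(default_factory=list)


def fake_agent(ctx: Context, step: str) -> str:
    # Stand-in for a model call; records the step in this ticket's context only.
    ctx.messages.append(step)
    return f"{step} done ({len(ctx.messages)} msgs in context)"


def work_through_backlog(tickets: list[str]) -> list[str]:
    results = []
    for ticket in tickets:
        ctx = Context()  # Read: start from an empty context every time
        ctx.messages.append(f"ticket: {ticket}")
        fake_agent(ctx, "plan")            # Plan
        outcome = fake_agent(ctx, "code")  # Code
        results.append(f"{ticket}: {outcome}")
        # Exit: ctx is rebound on the next iteration, so nothing leaks forward
    return results


if __name__ == "__main__":
    for line in work_through_backlog(["TICKET-1", "TICKET-2"]):
        print(line)
```

Every ticket ends with the same three messages in context, however long the backlog runs. That constant size is the property the loop exists to protect.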

To make sense of it all at a glance, here is how they stack up against each other:

| Feature | openspec | gsd | Gas Town | Chorus | BMAD (Tool / v6) |
|---|---|---|---|---|---|
| Primary Focus | Intent & Requirements | Execution & Task Isolation | Persistent Runtime Environment | Swarm Orchestration | Agile Workflow & Roles |
| Interface / Format | File-based framework | CLI wrapper | tmux & Git | UI Kanban & API | Claude Code native plugin |
| Agent Coordination | N/A (Guides the agent) | Single-agent, linear | Multi-agent capable | Multi-agent swarm | Multi-persona handoffs |
| Memory & State | Immutable markdown specs | Wipes clean after every ticket | Git-backed JSONL ("Beads") & Dolt | Sandboxed, layered memories | Numbered state/step-files |
| Core Mechanism | Spec-Driven Development | Read > Plan > Code > Exit | Background terminal sessions | Task DAGs (Dependency graphs) | /slash commands for personas |
| Best Use Case | Locking in the architectural truth | Burning through a ticket backlog | Keeping agents running continuously | Complex, multi-stage epics | End-to-end feature delivery |
| Human Involvement | High upfront (Writing specs) | Low (Trigger and walk away) | Medium (Monitoring sessions) | High (Approval gates) | Medium (Approving artifacts) |
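Here is a small sketch of the "Task DAG" row in the table. It uses Python's standard `graphlib.TopologicalSorter` to release tasks in dependency order, in waves that could run in parallel, and it holds back any task that needs human approval. The task names, the `needs_approval` set, and the `schedule` function are hypothetical. Chorus's own data model and API are not shown here.

```python
from graphlib import TopologicalSorter


def schedule(deps: dict[str, set[str]], needs_approval: set[str],
             approved: set[str]) -> list[list[str]]:
    """Return waves of tasks that can run in parallel.

    deps maps each task to the tasks it depends on. A task in needs_approval
    is released only once it also appears in approved; anything downstream of
    an unapproved task stays blocked.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = [t for t in sorter.get_ready()
                 if t not in needs_approval or t in approved]
        if not ready:
            break  # everything left is waiting on a human gate
        waves.append(sorted(ready))
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    deps = {"schema": set(), "api": {"schema"}, "ui": {"schema"}, "merge": {"api", "ui"}}
    print(schedule(deps, needs_approval={"merge"}, approved=set()))
    # [['schema'], ['api', 'ui']]
```

In this sketch, `merge` plays the role of the human-approval gate that sits in front of an agent's pull request. Add it to `approved` and the final wave is released.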

Explorative Coding & Artifacts

The traditional software development lifecycle is slow. A customer requests a feature, a PM writes a brief, a designer creates mockups, the team writes an ADR (Architecture Decision Record) or RFC, and weeks later, an engineer finally starts coding—only to discover legacy issues that derail the whole plan.

Harness engineering enables Explorative Coding, shrinking that weeks-long cycle down to hours. In this new workflow:

- The PM chats directly with the AI harness about an idea.

- The AI generates a preliminary plan and architectural spec.

- The harness executes the plan, pushing production-quality code to a test branch.

- The PM tests, iterates with the AI, and once satisfied, hands the validated branch over to engineers and designers for final review.

This speedup only works because the harness translates the PM's "vibes" into strict engineering artifacts before any code is written, which keeps the AI from generating an architectural nightmare. Here is how the different tools support this workflow and replace legacy documentation:

| Tool | Supports PM Explorative Coding? | Artifacts Generated | Replaces Traditional Tools? |
|---|---|---|---|
| openspec | Yes (Ideal). PM chats with the AI, but it is forced to output a strict design spec before coding. | `proposal.md`, `design.md`, `tasks.md` | Replaces ADRs/RFCs. Keeps the "source of truth" inside the repo. Pairs perfectly with lightweight trackers like Linear. |
| BMAD | Yes (Heavy). PM talks to the `/product_manager` persona to generate massive documentation. | Full PRDs, Architecture Docs, Epic breakdowns. | Replaces everything. With the Atlassian plugin, it auto-generates and syncs its markdown directly into Jira and Confluence. |
| Chorus | Yes (Overkill). You could trigger a swarm to prototype, but it is built for complex engineering epics. | Task DAGs, Kanban states. | Replaces Jira for the AI. Acts as an internal PM for the agent swarm, though humans usually keep their own tracker. |
| gsd | No. It is an execution loop that expects a pre-written, well-defined ticket. | None (just code and PRs). | No. Relies on you already having tickets in a system. |
| Gas Town | No. It is backend infrastructure (memory/sessions) for agents, not a PM workflow tool. | JSONL "Beads" (memory logs). | No. These artifacts are for the AI's brain, not humans. |
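A harness can enforce "artifacts before code" with a simple pre-flight check that refuses to start execution until the planning files exist and have content. The file names follow the openspec artifacts listed in the table. The flat directory layout and the `missing_artifacts` and `ready_to_execute` helpers are assumptions made for illustration, not openspec's actual CLI behavior.

```python
import tempfile
from pathlib import Path

# Artifact names taken from the openspec row above; the flat directory
# layout is an assumption for this sketch.
REQUIRED_ARTIFACTS = ("proposal.md", "design.md", "tasks.md")


def missing_artifacts(change_dir: Path) -> list[str]:
    """Return the required artifacts that are absent or empty."""
    missing = []
    for name in REQUIRED_ARTIFACTS:
        path = change_dir / name
        if not path.is_file() or not path.read_text(encoding="utf-8").strip():
            missing.append(name)
    return missing


def ready_to_execute(change_dir: Path) -> bool:
    # Code generation is allowed only once every artifact has real content.
    return not missing_artifacts(change_dir)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        change = Path(tmp)
        (change / "proposal.md").write_text("Add CSV export\n", encoding="utf-8")
        print(missing_artifacts(change))  # ['design.md', 'tasks.md']
```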

Harnesses for everyone: Solo Dev to Enterprise

Not every project needs an enterprise-grade orchestration swarm. In fact, adopting heavy-handed frameworks often generates so much AI documentation that it leads to hallucinations and context rot. Here is how you should scale your harness engineering to optimize for speed, token efficiency, and actual code quality:

- Sole developer:

  - The Harness: gsd (or none, if just prototyping).

  - The Play: If you are just playing around, vibe code directly. But the second you want to ship, write a basic README and task list, then let `gsd` churn through it. It wipes context after every ticket, keeping your AI sharp and your token costs low.

- Small to large teams (2-20 devs):

  - The Harness: openspec (with Chorus for larger epics).

  - The Play: Use `openspec` to lock in the architectural "source of truth." It acts as a strict boundary for your agents. Once the team scales past 5 developers, bring in Chorus to map those specs into Task DAGs and enforce human-in-the-loop code reviews before any agent merges a pull request.

- Enterprise teams (100+ developers across multiple teams):

  - The Harness: openspec (for planning) + gsd (for execution).

  - The Play: Use `openspec` to generate your `design.md` and `tasks.md` files, hooking cleanly into standardized trackers like Linear or lightweight Atlassian MCPs. Once the spec is human-approved, hand the task list over to `gsd`. It acts as your execution engine, burning through the tasks in tightly isolated, fresh-context loops. This hybrid approach keeps execution tied to the approved architecture while keeping AI context rot in check.
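The handoff from planning to execution amounts to parsing the approved task list and dispatching each unchecked item to its own fresh-context run. The sketch below assumes `tasks.md` uses Markdown checkboxes (`- [ ]` / `- [x]`). `pending_tasks` is a hypothetical helper, and the sketch calls neither openspec nor gsd.

```python
import re

# Matches "- [ ] task" or "- [x] task" (also "*" bullets); assumed tasks.md format.
CHECKBOX = re.compile(r"^\s*[-*]\s+\[( |x|X)\]\s+(.+?)\s*$")


def pending_tasks(tasks_md: str) -> list[str]:
    """Return unchecked task descriptions in file order."""
    pending = []
    for line in tasks_md.splitlines():
        match = CHECKBOX.match(line)
        if match and match.group(1) == " ":
            pending.append(match.group(2))
    return pending


if __name__ == "__main__":
    sample = """## 1. Export
- [x] 1.1 Add CSV serializer
- [ ] 1.2 Wire up /export endpoint
- [ ] 1.3 Add integration test
"""
    for task in pending_tasks(sample):
        # Each task would be handed to its own fresh-context execution run.
        print("dispatch:", task)
```

Completed items are skipped, so re-running the dispatcher after an interruption picks up where it left off.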

Note: You might notice we left out heavy tools like BMAD and Gas Town. When scaling AI, Developer Experience (DX) and team onboarding are critical. You need small workflow changes that deliver immediate benefits, rather than forcing teams to learn massive, overly opinionated frameworks. Tools like `openspec` and `gsd` provide these quick wins without drowning your engineers and agents in experimental boilerplate.

Our recommendation of openspec also comes down to traceability. It generates a succinct, version-controlled source of truth (like delta specs) rather than over-the-top boilerplate. If an issue arises, you can easily reverse-engineer the agent's exact intent to pinpoint the problem, ensuring you aren't accidentally modifying code that should remain untouched.
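Here is one way to make the traceability point concrete: if each change's spec declares which paths it may touch, the files an agent actually modified can be checked against that list. The `allowed` globs and the `out_of_scope` helper are hypothetical. openspec does not necessarily record file scopes this way.

```python
from fnmatch import fnmatchcase


def out_of_scope(changed_files: list[str], allowed: list[str]) -> list[str]:
    """Return changed files that match none of the spec's allowed globs."""
    return [f for f in changed_files
            if not any(fnmatchcase(f, pattern) for pattern in allowed)]


if __name__ == "__main__":
    # Hypothetical scope declared for an "add CSV export" change.
    allowed = ["src/export/*", "tests/test_export*.py"]
    # In practice this list might come from `git diff --name-only main`.
    changed = ["src/export/csv.py", "tests/test_export_csv.py", "src/auth/session.py"]
    print(out_of_scope(changed, allowed))  # ['src/auth/session.py']
```

In `fnmatch`-style patterns, `*` also matches `/`, so `src/export/*` covers nested files too. A stricter matcher may be preferable if scopes need to stop at directory boundaries.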

When it comes to traditional planning documents like ADRs (Architecture Decision Records) and RFCs, here is how each tool supports or replaces them:

- openspec: Replaces formal ADRs/RFCs entirely. Its lightweight `proposal.md` and `design.md` files act as the live, succinct architectural record directly inside your repository. Because these files are so structured and clean, they can be fed into a standard AI agent to generate a formal, high-fidelity ADR or RFC in seconds if your organization still requires them for compliance.

- Chorus: Features dedicated PM agents that natively draft, review, and link formal ADRs and RFCs directly into its task dependency graphs.

- BMAD: Can generate massive, highly detailed ADRs and RFCs via its Architect persona, though this often results in bloated, text-heavy documentation.

- gsd & Gas Town: Neither tool generates or aids in creating ADRs or RFCs. They are strictly focused on task execution and persistent runtime environments, not planning.

The Pocket Timetable for Enterprise AI

Looking back at my first year of high school, that tiny pocket timetable didn't just tell me where to go; it gave me the confidence to navigate a complex system without feeling overwhelmed. That is exactly what harness engineering is. It is the infrastructure of constraints, feedback loops, and strict phase gates that forces an AI to follow a predictable schedule. As the industry matures, the chaotic "prompt-and-pray" era of vibe coding is rapidly being replaced by harness engineering precisely because enterprises cannot ship products on vibes. They require guaranteed predictability.

So, how can an enterprise best use this to generate artifacts that actually get used, rather than just piling up bloated AI-generated documentation? The secret is to keep them succinct yet "contextful." By using a lightweight stack—like Linear for ticketing, openspec for architectural intent, and gsd for execution—teams create highly focused, version-controlled delta specs (design.md, tasks.md). These artifacts are small enough for a human to read quickly, but rich enough to give the AI the exact boundaries it needs to act without hallucinating.

This brings us to the ultimate question: how can enterprise teams adopt these tools, and does the traditional workflow still make sense?

Harness engineering doesn't replace your team; it gives them the schedule and structure needed to successfully orchestrate an AI workforce. It is time to stop chatting with AI, and start managing it.